We’ve survived the era of over-hyped, under-delivering AI hardware.

In 2024, devices like the Humane AI Pin and the Rabbit R1 were selling the idea that AI could navigate apps and services for you. They failed, mostly because they tried to reinvent the wheel.

Now, the March 2026 Pixel Drop has actually delivered on that promise, and it doesn’t require a weird orange box in your pocket.

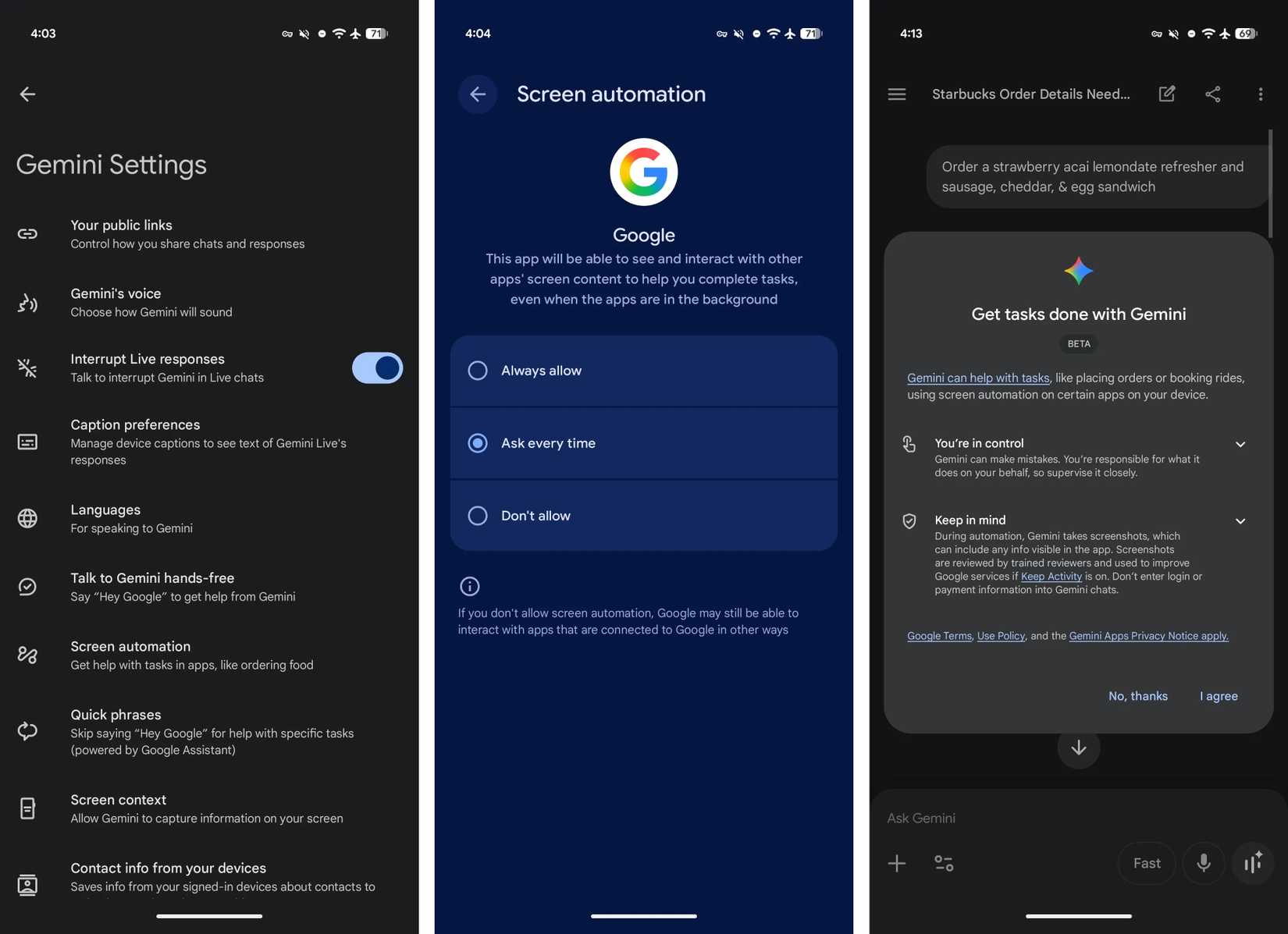

With Gemini now using visual reasoning to control third-party software on your screen, Google is rethinking how we interact with devices, and it’s one of the most spectacular and bizarre features I’ve ever seen.

How Gemini screen automation works on Android

Credit: Google

Older assistants worked within strict API limits and controlled integrations. Gemini is doing something completely different this time.

With screen automation, Gemini reads what’s on your screen, picks out things like text fields, menus, and search bars, and interacts with them in real time just like you would.
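To make that read-pick-interact step concrete, here is a minimal Python sketch. It is not how Gemini is implemented, and Google has not published this logic. The `Element` fields, the role names, and the `plan_tap` helper are all hypothetical, invented only to illustrate locating a control by what it is rather than by where it sits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    """Hypothetical description of one on-screen control (not a real Gemini type)."""
    role: str    # assumed role names, e.g. "search_bar", "button", "text_field"
    label: str   # visible text or accessibility label
    x: int       # left edge in pixels
    y: int       # top edge in pixels
    width: int
    height: int


def find_element(screen, role, label):
    """Return the first element whose role matches and whose label contains `label`."""
    wanted = label.casefold()
    for element in screen:
        if element.role == role and wanted in element.label.casefold():
            return element
    return None


def tap_point(element):
    """Centre of the bounding box, where a simulated tap would land."""
    return (element.x + element.width // 2, element.y + element.height // 2)


def plan_tap(screen, role, label):
    """One read-pick-interact step: locate the target, then return tap coordinates."""
    element = find_element(screen, role, label)
    if element is None:
        # Nothing matched. A real agent would presumably re-read the screen
        # or hand control back to the user.
        return None
    return tap_point(element)


screen = [
    Element("search_bar", "Search restaurants", 0, 100, 400, 60),
    Element("button", "Checkout", 20, 900, 200, 80),
]
print(plan_tap(screen, "button", "checkout"))
```

Because the lookup keys on role and label rather than fixed coordinates, the same call still finds the button if a redesign moves it elsewhere on the screen.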

You can say something like, “Order my usual Friday night pizza from DoorDash” or “Book an Uber to the airport,” and Gemini handles the rest, from adding items to checking out.

More importantly, Gemini does all of this without taking over your device. Everything runs inside a sandbox in the background.

Credit: 9to5Google

When automation saves time and when it creates new problems

Credit: Lucas Gouveia / Android Police | fizkes / Shutterstock

There’s no question this tech is forward-looking, but it comes with some built-in issues.

My first concern is how easily UI changes could break this system.

Modern applications are not static. Developers constantly push A/B tests and redesign layouts.

A human adapts if the button moves from the lower-left corner to the upper-right corner. Will an AI agent adapt when an app updates its UI overnight?

What happens when agentic AI has to navigate through pop-ups, banners, and consent prompts? We barely notice them, but an AI has to interpret and deal with them.

If something goes wrong, the live view system gives you control to fix it. You can jump in, fix the issue, and let the AI resume. That works, but it undermines the whole hands-off experience.

At this stage, you’re still faster than Gemini. Knowing where everything is gives you the advantage. So what’s the point? Well, it’s accessibility and multitasking.

Asking my phone to reorder last night’s dinner while I’m writing an email is a small but meaningful quality-of-life upgrade.

And for people with motor impairments, voice control over complex UI interactions is a big leap forward.

What happens to ads when AI does the browsing for you?

Credit: Lucas Gouveia / Android Police | tinhkhuong / Evgenia Vasileva / Shutterstock

There’s an elephant in the room that Google isn’t really talking about yet. Apps are built to keep you engaged, because that’s how they generate revenue.

Be it a sponsored listing on DoorDash or a suggested product on Amazon, the system works only if you stay long enough to see what’s being pushed.

When Gemini runs things in a background window, that whole model starts to fall apart. An AI agent isn’t stopping to notice a sponsored listing.

This is where things get complicated. Google is building this future, but it also makes its money from ads.

Will developers start designing against AI agents? Will we see AI-proof UIs and aggressive CAPTCHAs to force humans back in the loop? Time will tell.

How private is Gemini when it controls your apps?

Credit: Lucas Gouveia / Android Police

To use agentic Gemini, you need to grant it deep access to your device. Google’s response to privacy concerns is to compartmentalize the agent.

Since the AI runs in a virtual window, it’s theoretically isolated from the rest of the device, and the agent only sees what is happening within that specific session.

However, the data generated during that session — what you order, where you go, and how much you spend — is still processed by Google’s servers.

So if Google wants to, it can build an even more detailed profile of your daily life.

Access depends on your phone, region, and subscription

Right now, this beta feature is only available on high-end hardware like the Google Pixel 10 series and the Samsung Galaxy S26 series, and it’s limited to users in the United States and South Korea.

The company also introduced a tiered usage model, where the number of daily agentic requests depends on your Gemini subscription level.

I recently mentioned that Samsung’s Galaxy AI might start charging for more advanced features, and this looks like a step in that direction.

| Plan | Screen Automation Requests (per day) |
| --- | --- |
| Gemini Basic (without a Google AI plan) | 5 |
| Google AI Plus | 12 |
| Google AI Pro | 20 |
| Google AI Ultra | 120 |
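As a quick illustration of what those caps mean in practice, the sketch below turns the published daily limits into a lookup. The numbers come from the plan list above. The `remaining_requests` helper is hypothetical, and how Google actually counts or resets requests is not stated, so treat the per-day bookkeeping as an assumption.

```python
# Daily screen automation limits, copied from the plan list above.
DAILY_LIMITS = {
    "Gemini Basic": 5,
    "Google AI Plus": 12,
    "Google AI Pro": 20,
    "Google AI Ultra": 120,
}


def remaining_requests(plan, used_today):
    """Requests left today for a plan (hypothetical helper; never goes below zero)."""
    limit = DAILY_LIMITS[plan]
    return max(limit - used_today, 0)


for plan in DAILY_LIMITS:
    # Assume seven requests have already been used today.
    print(plan, remaining_requests(plan, 7))
```

On the free tier, seven requests would already be past the cap, while an Ultra subscriber would barely have dented theirs.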

Not perfect yet, but hard to ignore

Right now, agentic Gemini is still very much in a messy in-between stage. We are fundamentally forcing an AI to use interfaces built for human fingers, human eyes, and human attention spans.

There will be hiccups, confusing prompts, and situations where you’re better off just doing it yourself. But dismissing this as a gimmick would be a mistake.

This is the genesis of something much larger. Eventually, apps may drop the visual layer entirely and communicate with our agents instead.

Until things mature, watching Gemini move through existing software is an awkward but interesting glimpse of what’s ahead.
