Summary: Users turn to site-specific chatbots for quick answers, not a conversation. Design responses that are direct, scannable, and easy to expand when needed.

It's easy to assume that a friendly, conversational AI chatbot might help build customer relationships or make a brand feel more approachable. However, the reality is that users who land on your site with a question aren't looking for a conversation — they're looking for an answer.

In our study, participants often approached site chatbots much like search bars: they typed as little as possible, expected quick responses, and preferred answers they could scan quickly. A longer answer was not a better answer.

Users Type Short, Imperfect Queries

In a recent study, 9 people with varying levels of digital and AI literacy interacted with 8 different site-specific AI chatbots. Each participant interacted with 2–3 bots and was given realistic tasks based on their backgrounds and interests.

Participants’ interactions were strikingly nonconversational. No one said “hello” to the bots, and very few used “please” or “thank you.”

When participants began their tasks in chat, they launched straight into their first question.

Example prompts:

Turo: Need a car for three people. Going to Orlando, FL, from Hampton, Georgia

Scouting America: What are the fees?

Home Depot: Do you sell pavers?

Mississippi Gov: What permits do I need for operating a small business in MS?

Some participants didn’t use full punctuation or correct grammar. And several participants submitted prompts with typos. Some participants noticed their typos after submitting their prompts and wondered whether the chatbot would understand them. They soon realized it didn’t matter.

For example, as a participant typed her query into Williams Sonoma’s chatbot, she noticed she had missed the word “how” at the front of her question. As she noticed her mistake (out loud), the chatbot returned the result she hoped for.

“Did I miss the word ‘how’? Oh! It understood me already!”

She continued chatting with the bot, composing prompts that had more typos but no longer worrying about them. This was a consistent trend: participants usually started with a full sentence with proper grammar and punctuation, but as they realized the bot was smart enough to understand them, their followup prompts grew shorter and more keyword-like as the conversation continued. One participant explained:

“I find once (...) [I] trust that it's a working bot and it is pretty smart, I will tend to use (...) shorter English and not worry so much about complete sentences.”

(Typing is effortful, which is why chat is not always the right solution and why it is not the first thing users resort to on a site.)

Users got straight to the point — and they expected chat to do the same in its responses.


Cut Small Talk and Filler

Chattiness and sycophancy are easy traps for site chatbots to fall into, but the best ones avoid them.

When using Home Depot’s AI chatbot, one participant was pleased by the absence of the affectation that bothered him so much in general-purpose genAI chatbots like ChatGPT.

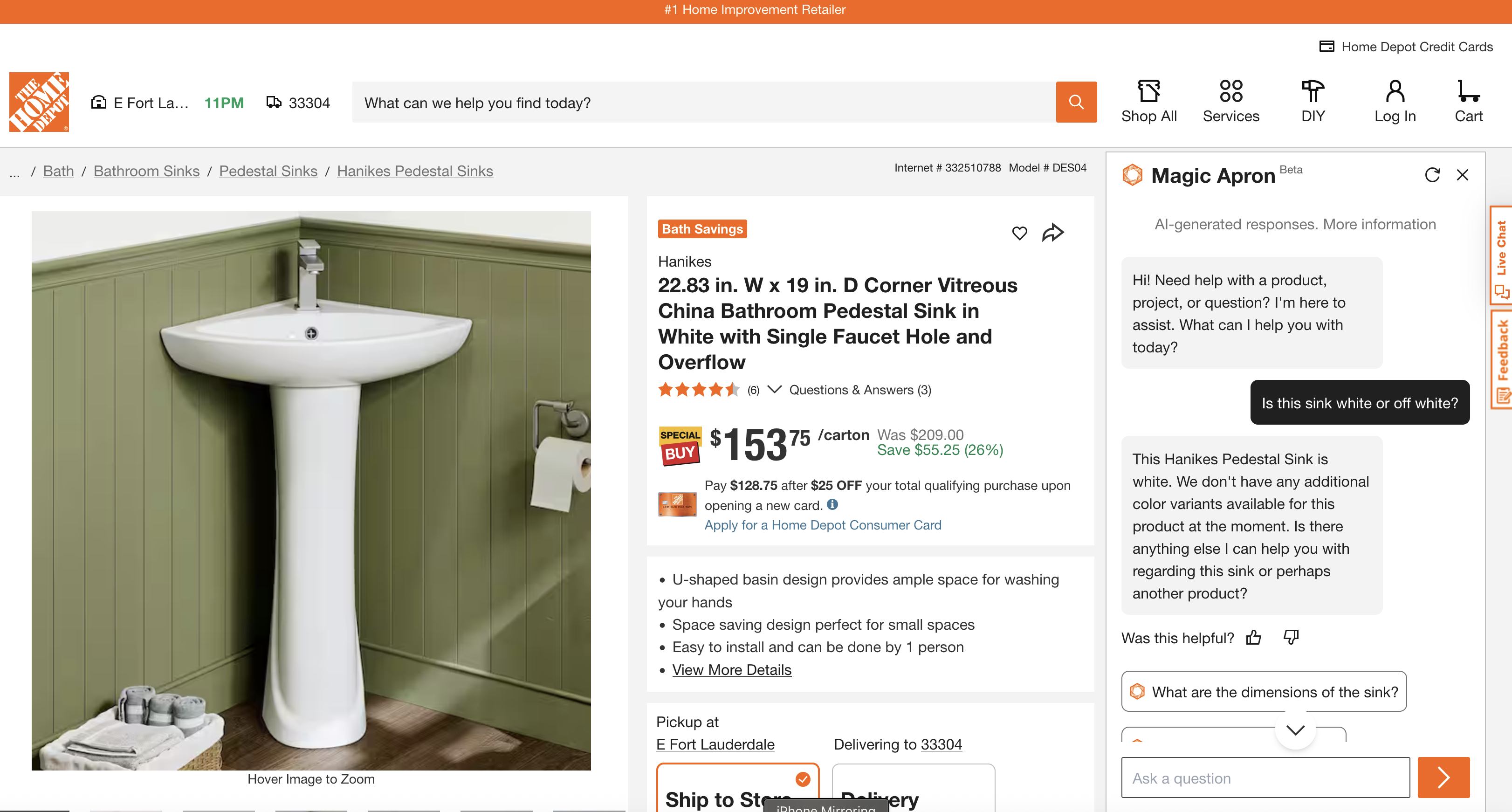

✅ Home Depot’s AI chatbot, Magic Apron, was appreciated for its direct answers


After using the chatbot to help him find relevant products for an upcoming DIY project, he reflected:

“I really like how the Home Depot chat is just giving me answers without any, like, ‘great question!’ and that kind of stuff, which drives me crazy with the LLMs (....) I view these as tools. I don't need to be pandered to (...) we don't need to talk and chat and have a cordial relationship. I just want to get the information.”

People want concise responses; pleasantries and sycophantic phrases, like “great question,” only lengthen a reply. But brevity alone isn't enough: how an answer is structured matters just as much as how short it is.
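One way a team might enforce this is a post-processing step that removes leading pleasantries before a reply is shown. The sketch below is a minimal illustration, not a description of how any chatbot in the study works. The function name `strip_filler` and the list of opener phrases are assumptions; a real deployment would derive the phrases from its own transcripts or handle this in the model's instructions instead.

```python
import re

# Hypothetical opener phrases; tune these against real transcripts.
# Trailing punctuation is required so that ordinary sentences starting
# with the same words (e.g., "Happy to help with that.") are left alone.
FILLER_OPENER = re.compile(
    r"^\s*(great question|good question|thanks for asking|"
    r"i'd be happy to help|happy to help)[!.,:]+\s*",
    re.IGNORECASE,
)


def strip_filler(response: str) -> str:
    """Remove leading pleasantries so the reply starts with the answer."""
    previous = None
    cleaned = response
    # Loop because openers can be stacked ("Great question! Happy to help!").
    while cleaned != previous:
        previous = cleaned
        cleaned = FILLER_OPENER.sub("", cleaned, count=1)
    return cleaned


print(strip_filler("Great question! Yes, we sell pavers."))
```

A filter like this only shortens the opening of a reply; it does nothing for structure, which is the subject of the next section.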

Make Answers Concise and Easy to Scan

We’ve written about this before; genAI needs to write for the web. The same best practices that apply to writing for the web also apply to site chatbots. If anything, it’s even more crucial to adhere to web-writing best practices, since the chat viewport is much smaller, so even a small paragraph can look like a wall of text.

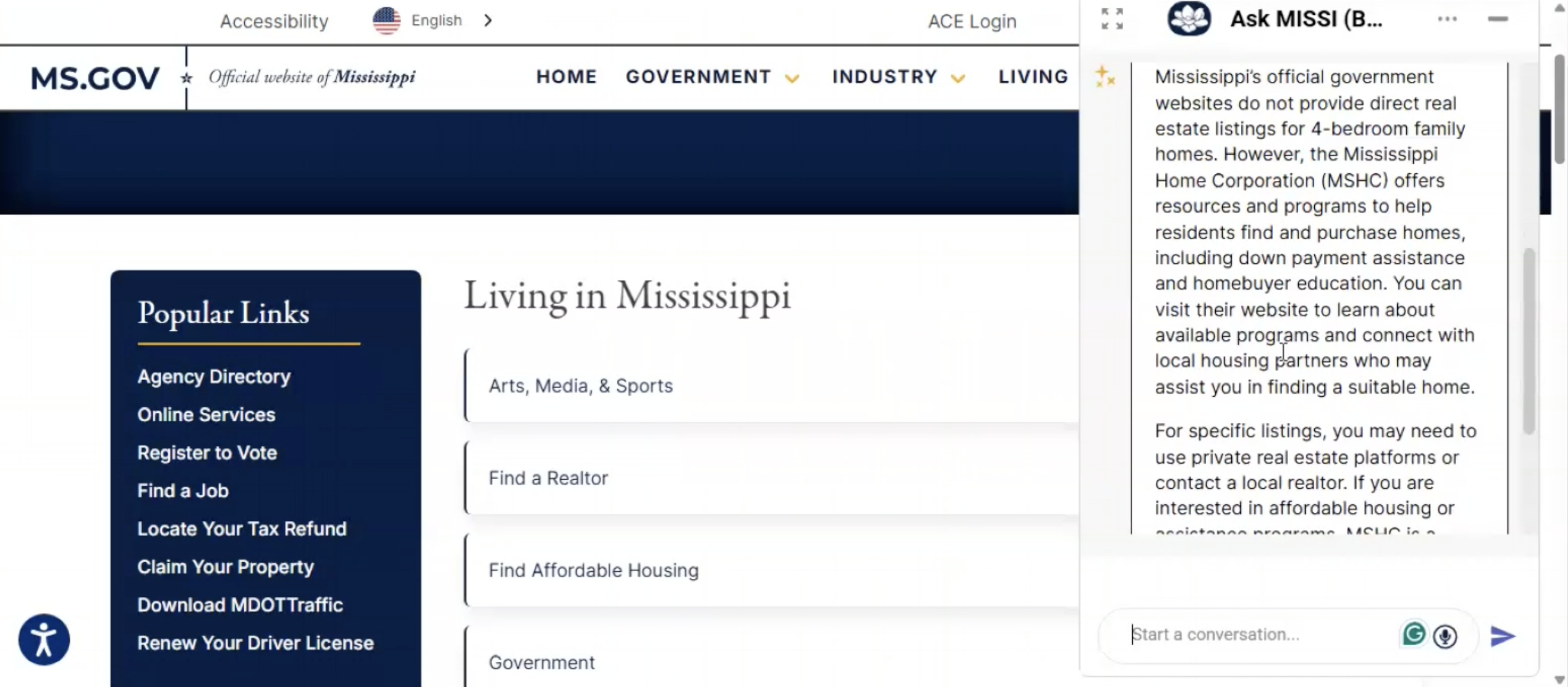

The Mississippi government’s AI chatbot was guilty of producing long paragraphs of text. When one of our participants used the chat to learn about moving to the state, she received a response that filled the entire chat viewport. The lack of concision and formatting put her off engaging further.

❌ Mississippi state government’s AI chatbot, Ask MISSI, provided wordy responses that filled the entire chat viewport and were not sufficiently formatted.


In addition to being long, Ask MISSI’s response was streamed (i.e., displayed incrementally as it was generated). As the participant watched the chat viewport fill up quickly, she told us, “The pouring in of information kind of made me feel overwhelmed.”

Streaming long responses can intensify the sense of overload, especially when the answer is already too dense to scan.

Another participant complained that the response was too long and lacked formatting.

“It's just too wordy. It's not chopped up (...) I don't like reading all of that. I prefer, like, bullet points. I prefer links. I prefer pictures. This just seems too exhausting for me. Like, it's just too much to read (....) visually, it just doesn't appeal to me the way it is, the way it's written like that, like it's laid out.”

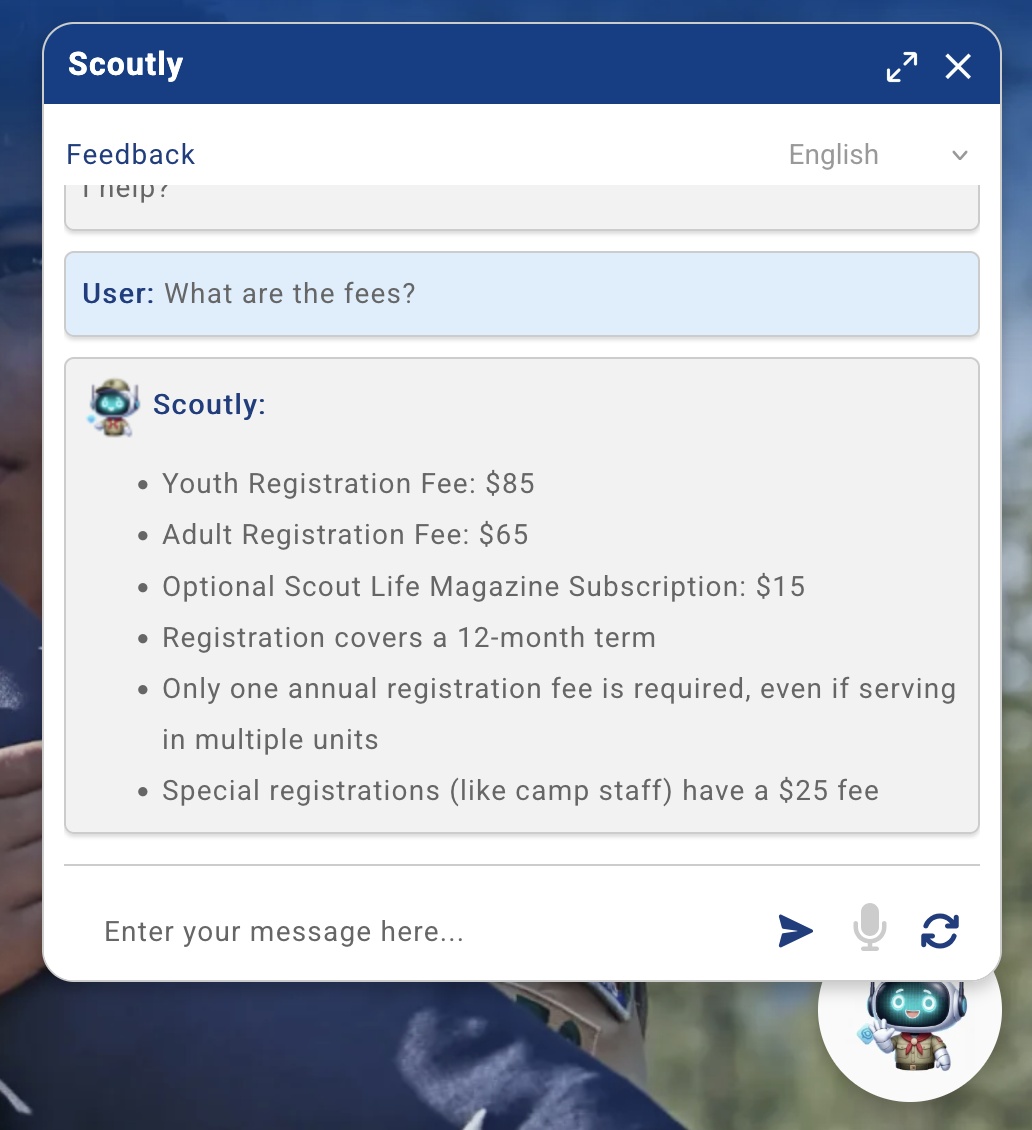

In contrast, the AI bot on Scouting America’s website, “Scoutly,” was appreciated for its concision and formatting. When one participant used the bot to learn about membership fees, he received a concise, helpful response. As he read it, he remarked:

“Okay, so that's nice. [It] just gave me the answer directly without too much preamble, and it gives me the fine print, but in a very readable way. Yep, this is a good answer. Informative and concise.”

✅ Scoutly, the AI bot on Scouting America’s site, provided concise, direct answers, without fluff.


Being concise does not mean omitting useful information. It means removing everything else. Designers can reduce the risk of overwhelming users by keeping sentences short and paragraphs to no more than 2–3 sentences. When responses must be long — for example, when a process involves many steps — configure AI chatbots to use content-formatting techniques like lists, bold text, headers, and white space.
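The length guidelines above can be turned into a rough automated check for auditing chatbot transcripts. The sketch below is a crude heuristic under stated assumptions: the 3-sentence paragraph limit comes from the guideline above, but the 25-word sentence limit, the function names, and the punctuation-based sentence splitting are all illustrative choices, not findings from the study.

```python
import re

MAX_SENTENCES_PER_PARAGRAPH = 3  # upper end of the 2-3 sentence guideline
MAX_WORDS_PER_SENTENCE = 25      # assumed threshold, not from the study


def split_sentences(paragraph: str) -> list[str]:
    """Split on whitespace that follows ., !, or ? (a rough approximation)."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return [part for part in parts if part]


def audit_response(text: str) -> list[str]:
    """Return a list of readability problems found in a chatbot reply."""
    problems = []
    # Paragraphs are assumed to be separated by blank lines.
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    for index, paragraph in enumerate(paragraphs, start=1):
        sentences = split_sentences(paragraph)
        if len(sentences) > MAX_SENTENCES_PER_PARAGRAPH:
            problems.append(f"paragraph {index}: {len(sentences)} sentences")
        for sentence in sentences:
            word_count = len(sentence.split())
            if word_count > MAX_WORDS_PER_SENTENCE:
                problems.append(
                    f"paragraph {index}: sentence with {word_count} words"
                )
    return problems


print(audit_response("One. Two. Three. Four."))
```

A check like this can flag candidates for review, but it cannot judge whether a long response is well formatted or whether the right information was kept.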

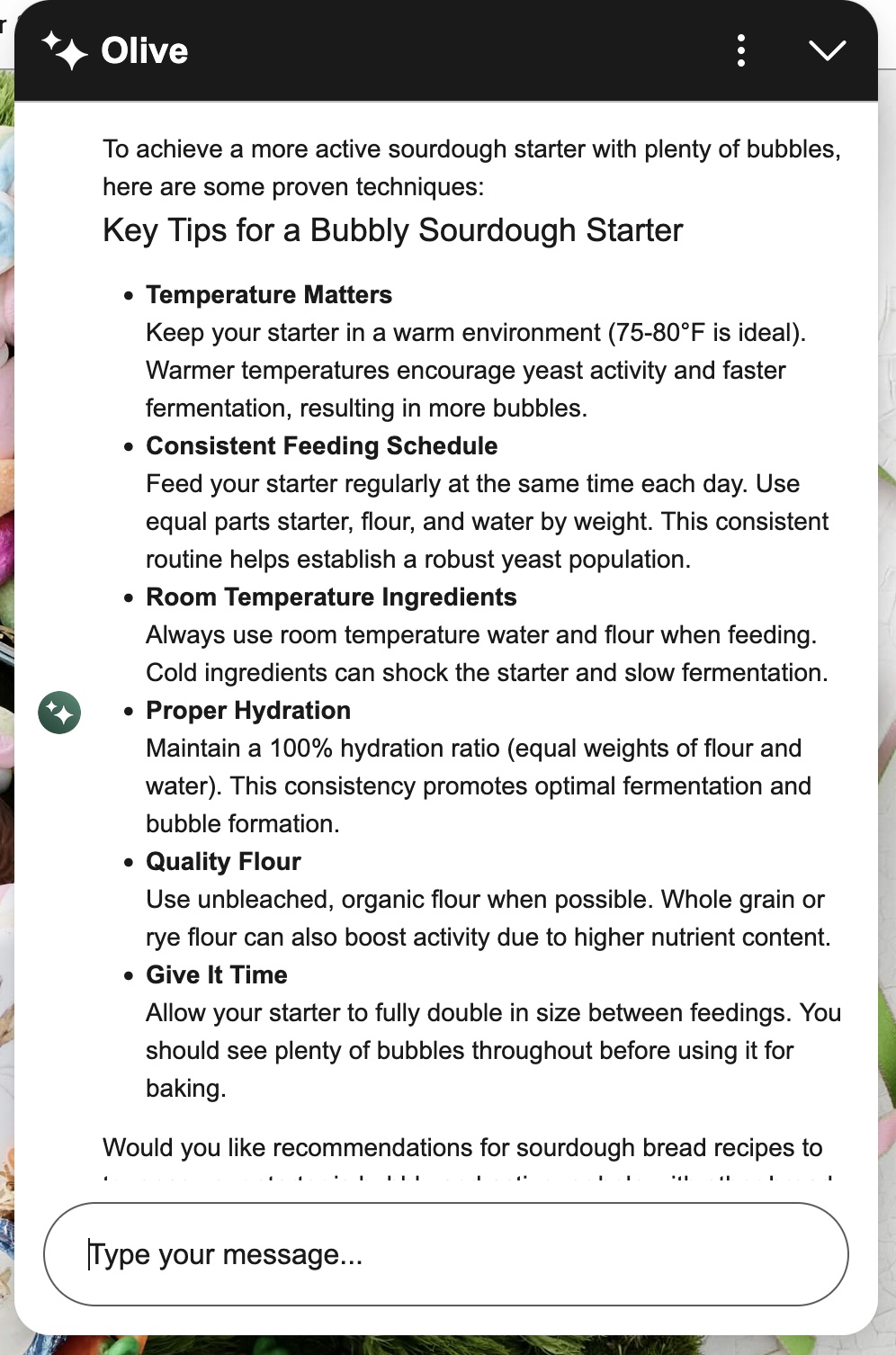

Williams Sonoma’s AI chatbot provided cooking and hosting tips. Given the nature of the requests, the responses were often long. However, participants did not seem to mind because the responses were well formatted. For example, one participant asked how to increase the number of bubbles in her sourdough starter and received a list of recommendations. As soon as she saw the response, she said:

“Oh, I love this (...) I love that they're bulleted, and it's not just like one big paragraph.”

✅ Williams Sonoma’s AI chat used content-formatting techniques to make long responses scannable.


Formatting helps, but even a well-structured response can overwhelm users if it provides too much information. The order in which information is presented matters, too.

Give Answers First; Provide Detail on Demand

In an attempt to be helpful, some of the chatbots we tested provided too much information and overwhelmed our participants.

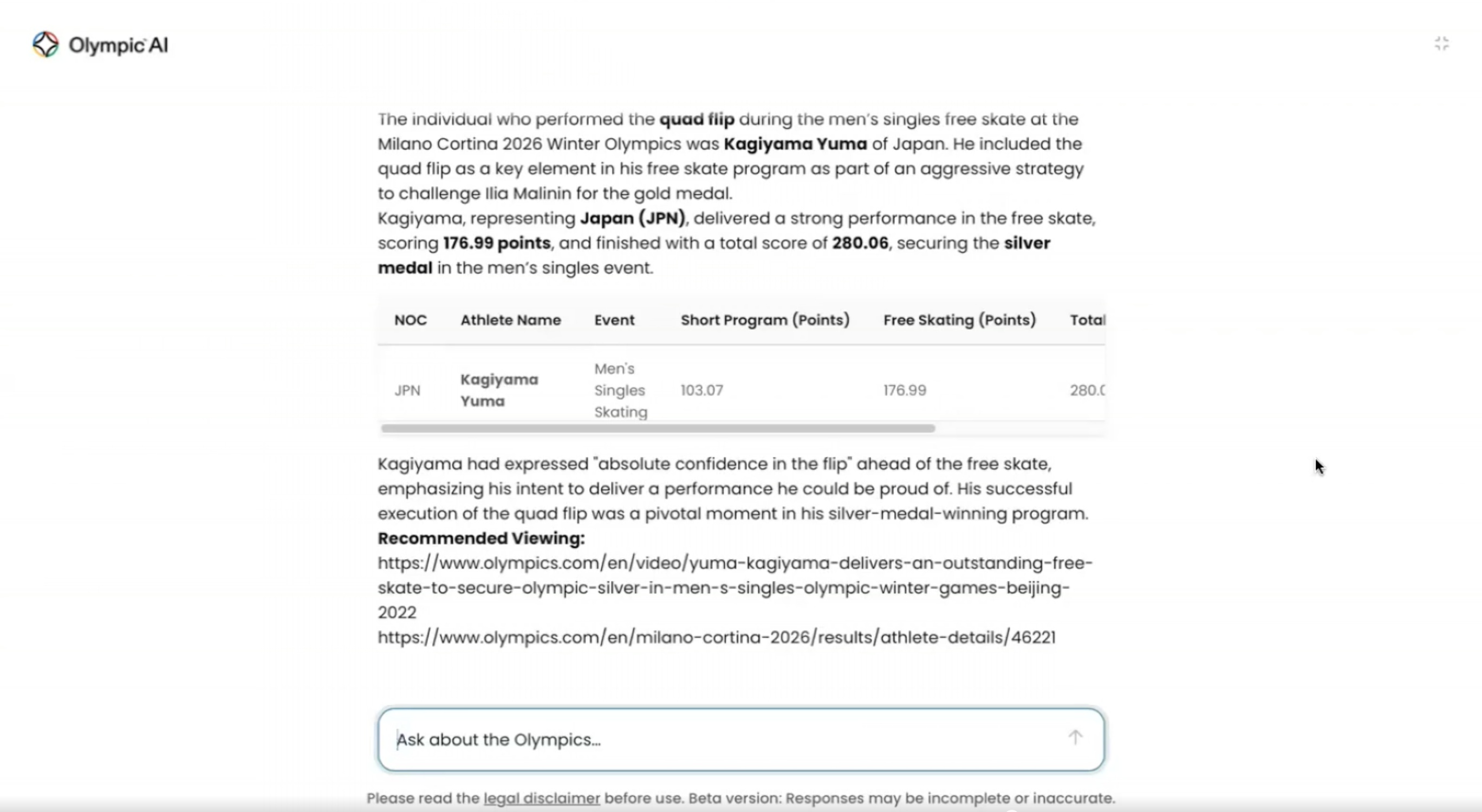

One participant, who had not been watching the Winter Olympics, had heard of a skater who had done a backflip on the ice. She wanted to know the skater's name. She was thinking of the U.S. figure skater Ilia Malinin. She typed into the AI chat feature on the Olympic Games site, "Who was the individual who did a flip?" The chatbot returned a response far beyond what she needed — scores, background details, and context she hadn't asked for. She just wanted a name.

“[it] probably gave me too much information (...) I just kind of wanted to know the guy's name, and then I guess I could ask more questions along those lines to get to, you know: what was his score? Show me the video.”

❌ The chatbot for the Olympic Games provided a very long answer, which our participant didn’t want to read.


The participant compared the experience with how ChatGPT usually responds to her.

“What I do like about chat [ChatGPT] is chat kind of gives you the bullet points at first, and then you ask [for] more detail, but it doesn't overwhelm you, like that Olympic site.”

We also noted that, because she had not specified what kind of flip, the chatbot had returned the wrong skater: Kagiyama Yuma, who had executed a quad flip, not the backflip she had in mind. A better response would have first asked a clarifying question and then, once the intent was clear, answered with just the skater's name and offered followup prompts such as “See his score” or “Watch the video.”

Clarification questions should be used sparingly because they introduce friction into the process of getting an answer. However, when a user asks an ambiguous question, it can be valuable to ask a quick, relevant clarification question to avoid giving the user the “wrong” answer.

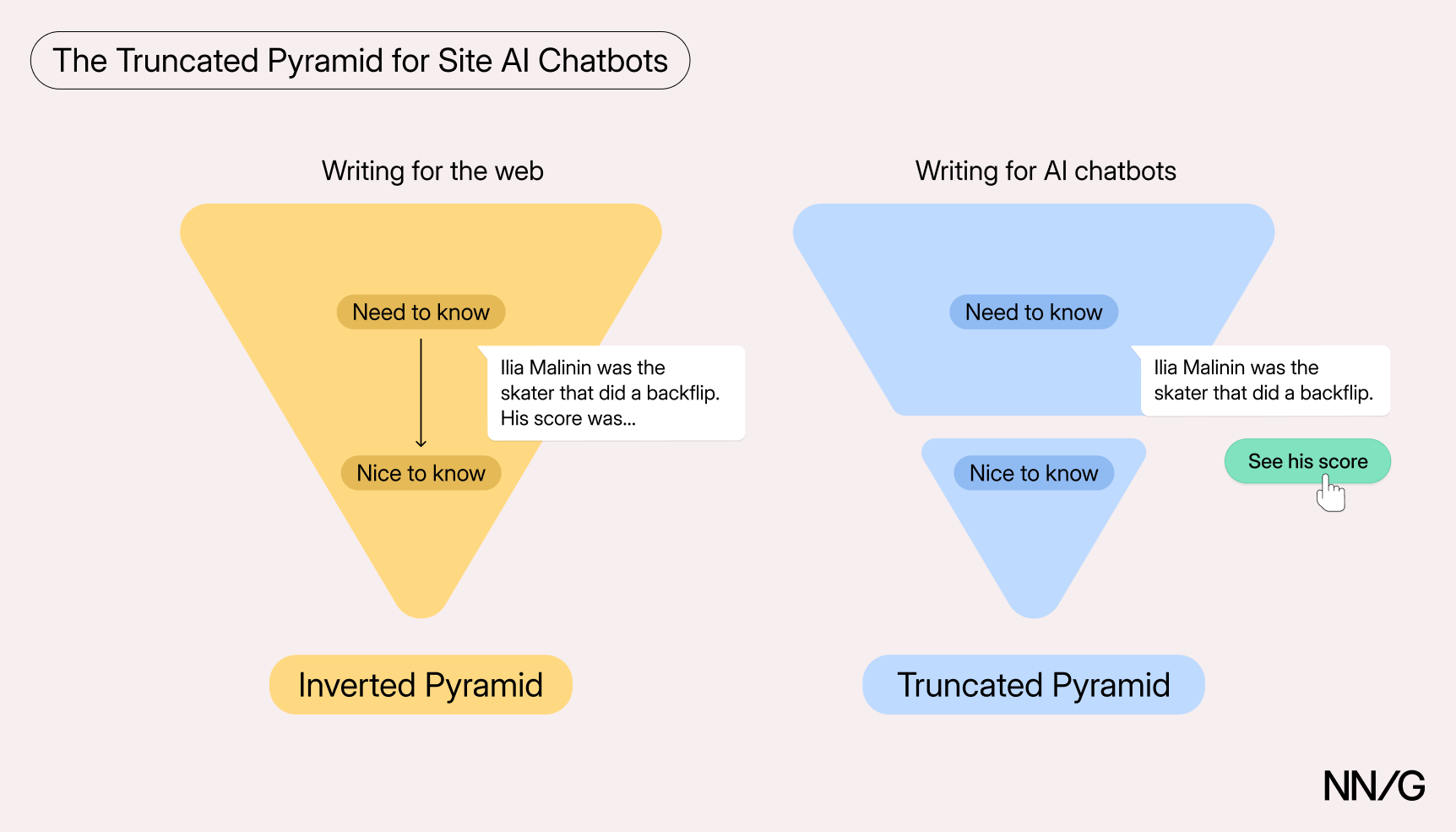

The Truncated-Pyramid Rule

In writing for the web, we recommend the inverted-pyramid rule: present the need-to-know information first, followed by nice-to-know details and context. For site chatbots, the inverted pyramid is not enough. In a small chat window, even well-prioritized information can still feel overwhelming.

A better model is a truncated pyramid: give only the essential answer first, then offer relevant followup prompts so users can pull additional detail on demand.

Good web writing follows the inverted pyramid. Good writing for site AI chatbots should follow the truncated-pyramid rule, where extra detail is progressively revealed through suggested followup prompts.


A useful rule of thumb: The first answer should include only what the user asked for and any caveats needed to keep that answer accurate. Everything else — background context, edge cases, process steps beyond the immediate next one — belongs behind a followup prompt. This concept is similar to progressive disclosure. If a piece of context is not essential, the bot should leave it behind a suggested followup prompt that interested users can click on.
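As a minimal sketch, the rule of thumb can be expressed as a response structure that separates what is shown immediately from what is offered on demand. The class name `TruncatedAnswer` and its fields are hypothetical, and the example values come from the Olympic Games scenario described earlier; how followup prompts are rendered (buttons, chips, links) is left to the chat interface.

```python
from dataclasses import dataclass, field


@dataclass
class TruncatedAnswer:
    """A chatbot reply shaped by the truncated-pyramid rule."""

    answer: str                                          # only what was asked
    caveats: list[str] = field(default_factory=list)     # needed for accuracy
    followups: list[str] = field(default_factory=list)   # detail on demand

    def first_message(self) -> str:
        """Text shown immediately: the answer plus essential caveats only."""
        return " ".join([self.answer, *self.caveats])


reply = TruncatedAnswer(
    answer="Ilia Malinin.",
    followups=["See his score", "Watch the video"],
)

print(reply.first_message())  # the essential answer
print(reply.followups)        # offered as suggested prompts, not inline text
```

Keeping followups in a separate field makes it harder for background detail to leak into the first message, because anything that is not the answer or a necessary caveat has nowhere to go but a suggested prompt.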

Although this recommendation sounds simple, implementing it well can be challenging. A site chatbot must know which types of information are essential and which are extra. That is why research with your users is so important.

When It Can’t Help, Say So Plainly

When a chatbot cannot help, do not pad the gap. In our study, there were several situations in which AI chatbots could not actually help users. Unfortunately, instead of giving a simple response, some tried to be helpful by offering advice or explanations that users did not need or want.

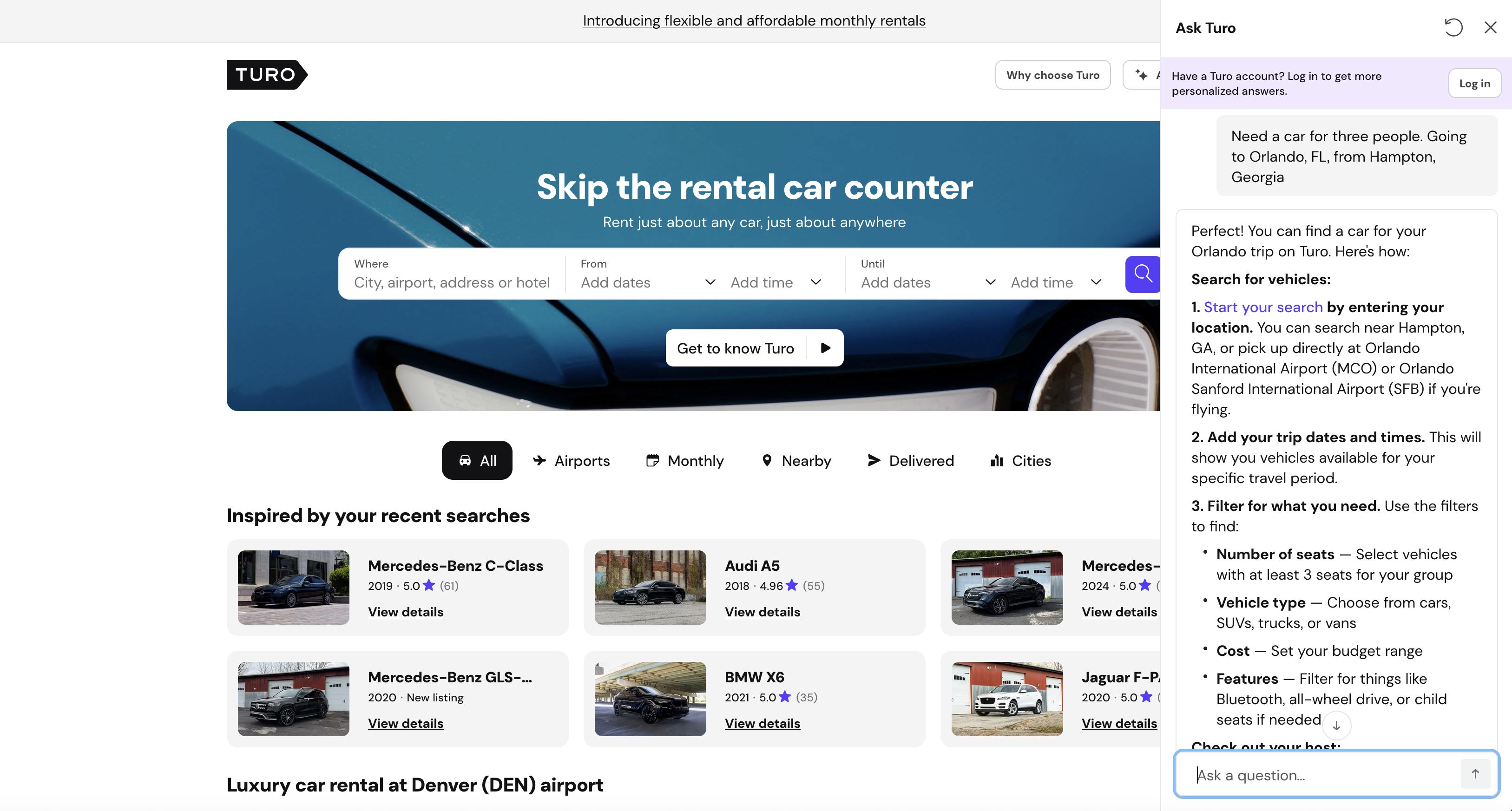

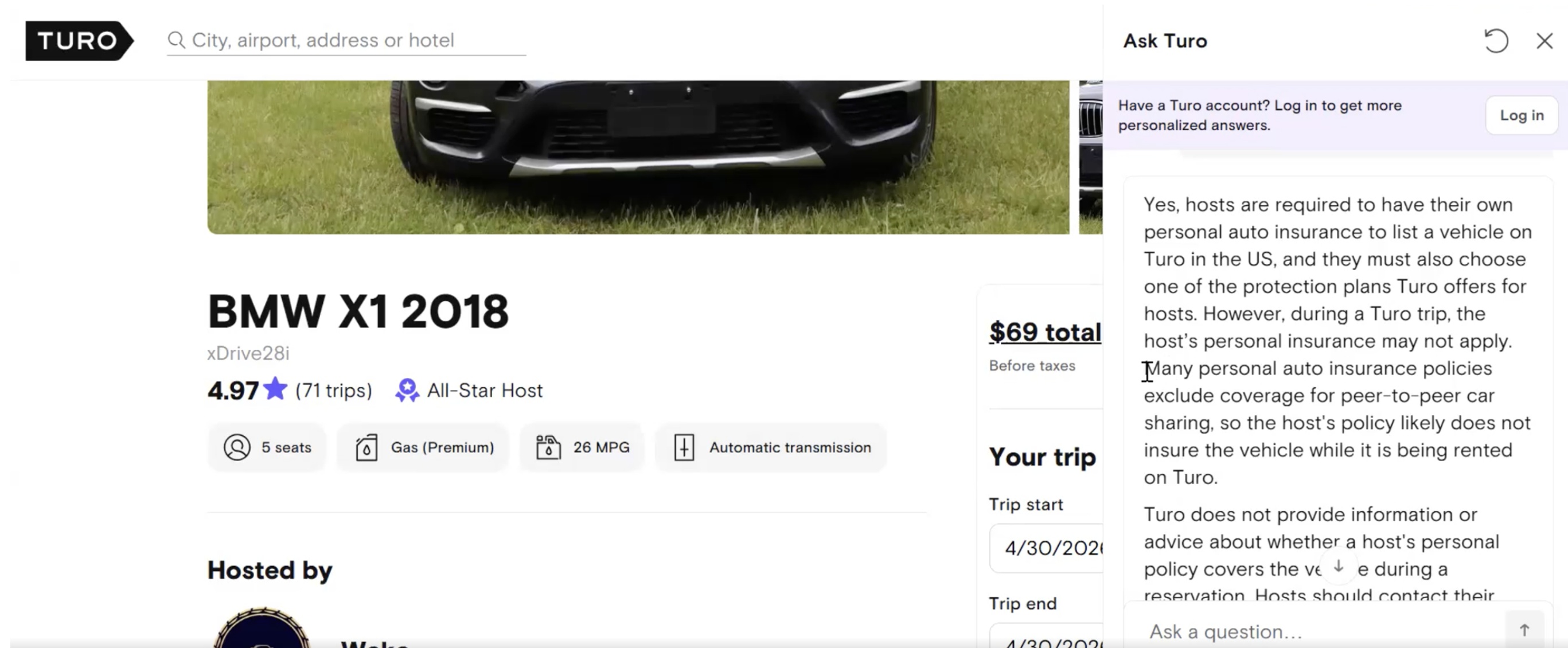

Example: Turo

One participant used the AI chatbot on Turo, a peer-to-peer car-rental company, to help him find cars for a planned trip. He did not realize that Turo’s chatbot could not actually search for cars. He submitted his request and received a lengthy response explaining how to use the site to search.

He scanned the response and mistook the chatbot’s description of available filters for a request for more trip requirements, which he then provided in chat. When he received another similar response instructing him to search manually, he finally realized the chatbot could not help him and complained about the time he had wasted.

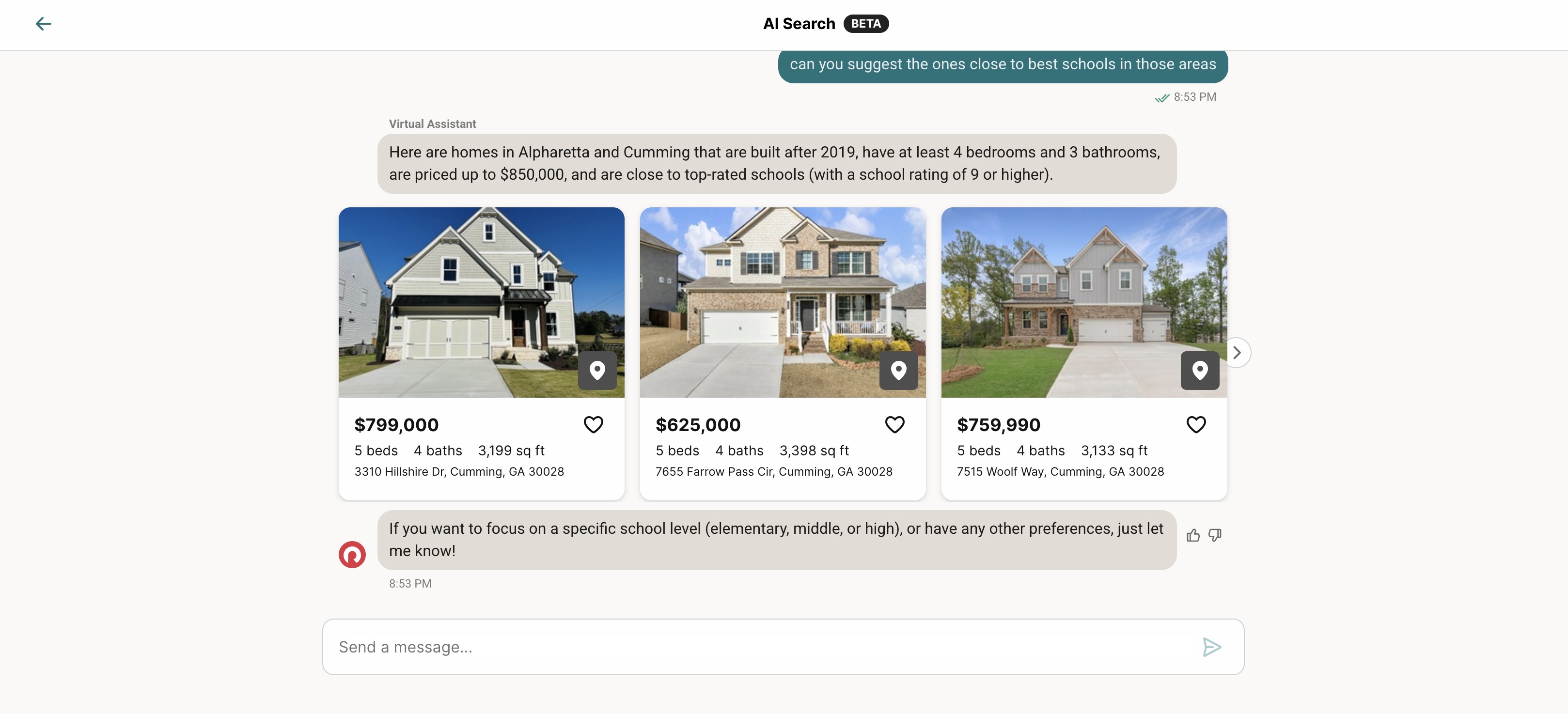

❌ Ask Turo couldn’t find cars for users but didn’t clearly say so from the outset. This resulted in a misunderstanding.

Example: Redfin


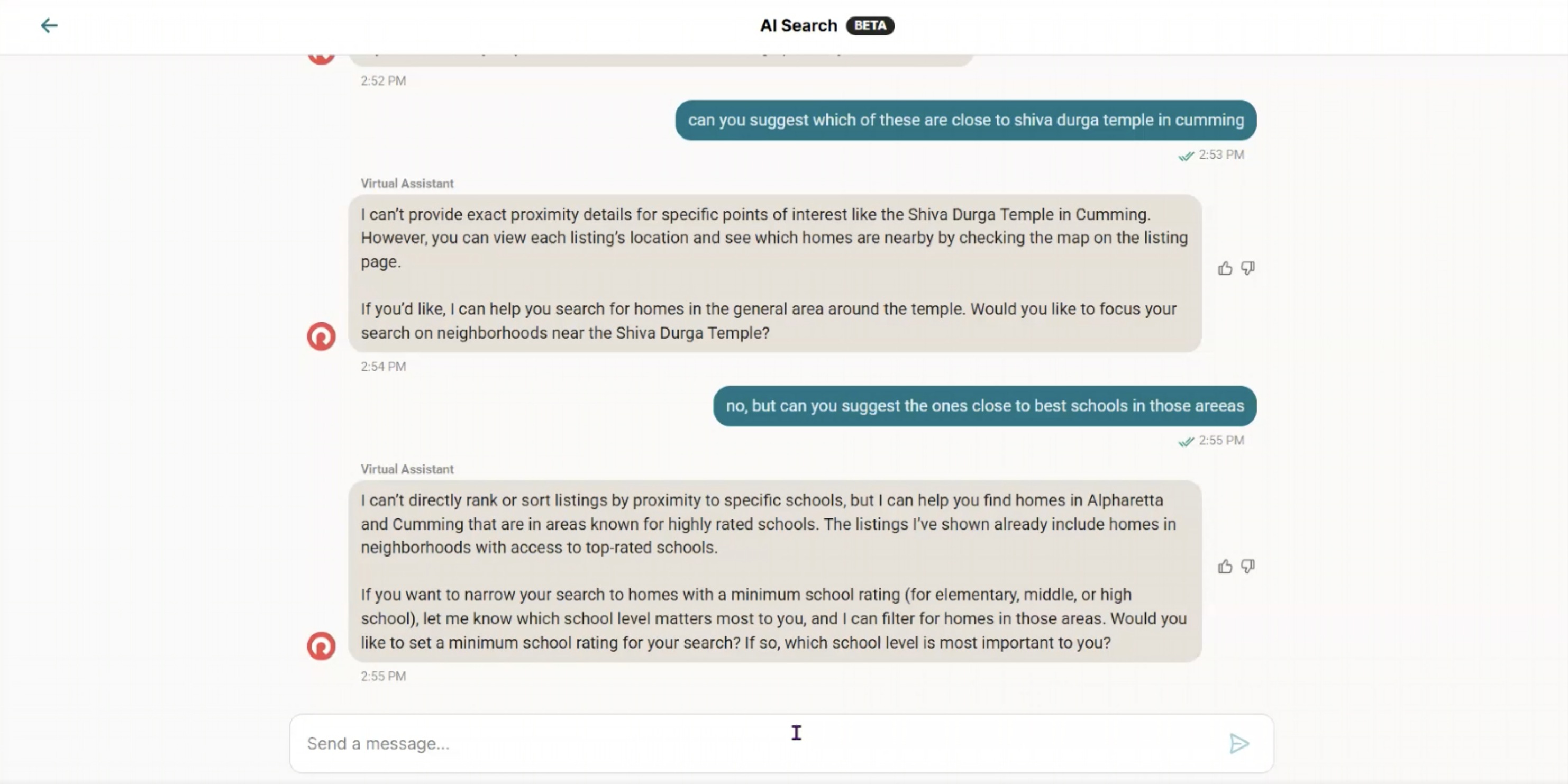

A similar issue occurred with Redfin’s smart search. When a participant asked for houses near the best schools in the area, the AI chatbot responded that it couldn’t rank or sort houses, but it could filter them by minimum school rating. Since this suggestion appeared in the second paragraph of the response, the participant missed it.

❌ Redfin’s response began by saying it could not rank or sort listings. In the second paragraph, it mentioned that it could filter homes by school rating. That may have been helpful, but the participant never got that far.

❌ Redfin’s response began by saying it could not rank or sort listings. In the second paragraph, it mentioned that it could filter homes by school rating. That may have been helpful, but the participant never got that far.

When the participant received the response, she read the first sentence, understood that the chatbot could not help, and stopped. She felt the response was too long for what amounted to “sorry, I can’t help with that.”

“I do not want, like, to beat around the bush. You say you can't do it. Okay, that's fine (....) I do not like (...) long explanations (...) because I do not have the patience to read through everything.”

Since our study, Redfin’s AI chatbot appears to have changed its approach. The chatbot now automatically applies a school-rating filter when users ask for houses near the best schools. Users can prompt to adjust the filter if needed.

✅ A newer version of Redfin’s AI chat feature automatically applied a school-rating filter when users asked for houses near the best schools.

Prioritize Specific Answers over Generic Ones


Users want answers, not just responses. Participants were disappointed when they were told to review other pages or given vague responses. Where possible, ensure bots have access to the appropriate information so that they can answer users' questions directly, rather than sending them elsewhere.

For example, Turo’s AI chatbot, Ask Turo, seemed to lack information on how much protection plans cost or what the host’s insurance covered. When a participant asked about the protections included and whether the host’s policy might cover him during his booking, he received vague, noncommittal replies and instructions to check his own insurance, the host’s policies, and the protection plans on the booking page.

After reviewing the responses, the participant complained that they felt generic and gave him “case-by-case vibes,” which led him to prefer speaking to a person instead.

❌ One participant trying to understand Turo’s protection plans found Ask Turo’s responses vague and generic.

❌ One participant trying to understand Turo’s protection plans found Ask Turo’s responses vague and generic.

Specific responses have another advantage: they are often shorter. Generic answers usually have to account for multiple scenarios, which makes them longer. That was true of Ask Turo. The participant had to work through several paragraphs of text and still felt he had not gotten a clear answer.

In some situations, it might not be possible to provide all information to a bot. In this case, providing helpful ranges or estimates can be useful. The example below shows a real generic response from Ask Turo and an edited, specific version.

Example prompt: I want to borrow this car for 2 weeks starting on Monday. How much would each of these protection plans cost?

Generic response:

The exact cost of each protection plan for your 2-week trip depends on the specific car and dates you select. Turo will show you the price for each plan during checkout, and the protection cost is added as a separate line item. Each plan has a different price and coverage level.

To see the cost for your trip:

Select your car and trip dates in the Turo app or website. At checkout, you’ll see the available protection plans and their prices for your booking. You can find more info on the plan details page.

Specific response:

I can’t see the car you’re looking at, but I can give you some average ranges. Costs vary by car and dates.

Daily ranges:

- Premium: $25–$60 per day
- Standard: $10–$30 per day
- Minimum: $5–$15 per day

Average cost for two weeks:

- Premium: $595
- Standard: $280
- Minimum: $140

To see the exact cost, select the car and trip dates, and enter your age on the booking page.

Scouting America’s chatbot, Scoutly, provided a specific response to one of our participants who asked how much he should expect to pay to get his daughter started as a scout. His prompt and Scoutly’s reply are shown below.

User’s prompt: Approximately how much does it cost to get going, including all the incidentals?

Scoutly: Here’s a breakdown of the estimated startup costs:

- National Registration Fee: $85
- Uniform: $50-$100 (depending on size and program)
- Local Troop Dues: Approximately $100 per year
- Initial Gear/Supplies: $50-$150

Total Estimated Startup Costs: $300-$450

Additional notes:

- Costs can vary by troop.
- Some troops offer financial assistance.
- Uniform banks can help reduce initial expenses.
- Gear can be accumulated over time.

When the participant received the answer, he understood that some of the information was an estimated range, but he appreciated that the bot provided the range and gave him an overall estimated cost.

“That's a good answer. I like that it did the math for me, and it's being realistic. It can't give me an exact number, so it's giving me a range (....), so that's a pretty useful answer.”
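Doing the math for the user is simple once the bot has ranges to work from. The two-week averages in the edited Ask Turo response above are consistent with taking the midpoint of each daily range and multiplying by 14 days; the sketch below shows that arithmetic. The function name `estimate_trip_cost` is hypothetical, the midpoint method is our assumption about how such an average could be derived, and the dollar figures are the ones from the edited example, not verified Turo prices.

```python
# Daily price ranges in USD, copied from the edited example response.
DAILY_RANGES = {
    "Premium": (25, 60),
    "Standard": (10, 30),
    "Minimum": (5, 15),
}
TRIP_DAYS = 14  # two weeks


def estimate_trip_cost(low: float, high: float, days: int) -> dict:
    """Return the low, high, and midpoint-based average cost for a trip."""
    midpoint = (low + high) / 2
    return {
        "low": low * days,
        "high": high * days,
        "average": midpoint * days,
    }


estimates = {
    plan: estimate_trip_cost(low, high, TRIP_DAYS)
    for plan, (low, high) in DAILY_RANGES.items()
}

for plan, cost in estimates.items():
    print(f"{plan}: ${cost['average']:.0f} on average "
          f"(${cost['low']}-${cost['high']})")
```

Under these assumptions, the computed averages are $595, $280, and $140, matching the edited response. Showing the full low-to-high span alongside the average is a design choice; in a small chat window, the single average may be enough, with the range offered as a followup.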

Conclusion

The chatbots users trust and return to are the ones that treat each interaction as a quick transaction and deliver on it. That means resisting the temptation to be helpful in ways users didn't ask for: extra context, pleasantries, exhaustive detail. Every word in a chatbot response should earn its place. Teams that regularly audit their chatbot responses against that standard, and test with real users, will be the ones that get it right.
