Nicolle Fuller / Sayo Studio

Brains and black holes intrigue Cecilia Garraffo in equal measure.

Both entities caught Garraffo’s attention back in 2010, when she traveled from Buenos Aires, Argentina, to work at Brandeis University. She was studying extreme gravitational fields, but she was curious about neuroscience, too. So she joined the school’s computational neuroscience group.

There, she came upon the concept of neural networks. These aren’t webs of cells in a human brain, though they are in some cases meant to mimic them. Neuroscientists can study the brain’s complex inner workings using layers of mathematical functions called neurons. Similar to real neurons, these artificial neurons receive and send data (often to and from other neurons) as part of a larger network.

Such neural networks were already an integral part of the fast-growing field dubbed artificial intelligence (AI). Rather than write numerous lines of code to tell a computer exactly what to do in individual situations, a programmer can, in a few carefully calibrated keystrokes, design a neural network that can handle a variety of scenarios without being told exactly what to do. Fine-tuning is required, which usually includes feeding the network a whole lot of training data from which it “learns.” But once trained, a neural network becomes a tool that can analyze data on vast, even inhuman scales.

In astronomy, that capability isn’t just nice to have, Garraffo argues — it’s a must. The Vera C. Rubin Observatory in Chile, the Square Kilometre Array in Australia and South Africa, the global Event Horizon Telescope, and the Euclid space telescope are just some of the observatories that already are or soon will be producing more data than humans can handle.

“We’re observing hundreds of supernovae a year now,” says Garraffo (currently at Center for Astrophysics, Harvard & Smithsonian). But with Rubin, “we’re going to observe 1 million a year.” Following up on most of them isn’t an option; astronomers must be particular. But choosing the most interesting ones requires looking at them all. “They have to have an algorithm that says, ‘This is not only an explosion, it is an anomalous one,’” she adds.

Garraffo continued to study theoretical physics, cosmology, and stellar astrophysics — not neuroscience. But she never lost her fascination with neural networks. In 2023, she founded AstroAI, a multidisciplinary group of computer scientists and astronomers, and the first institute dedicated to the development of AI for astrophysics.

It’s unlikely that there are many readers of this magazine who don’t regularly encounter AI in some form, whether in Google’s AI search summaries, online shopping recommendations, or daily activities such as banking. But the technology’s adoption in astronomy has been slow in comparison to its eager use in commercial applications.

Astronomer and data scientist Josh Bloom (University of California, Berkeley) champions AI but understands the need for caution. “We are going to have to put a tremendous amount of computational time and people time into this,” he said at a colloquium focused on AI-human interactions. “Without a guaranteed result, it’s dangerous.”

But the rewards are worth those risks, Garraffo believes. “We’re missing out on a lot of improvements, developments, and very powerful techniques that we’re not using,” she says. “Not having access to these methods is similar to not having had access to a computer when computers started.”

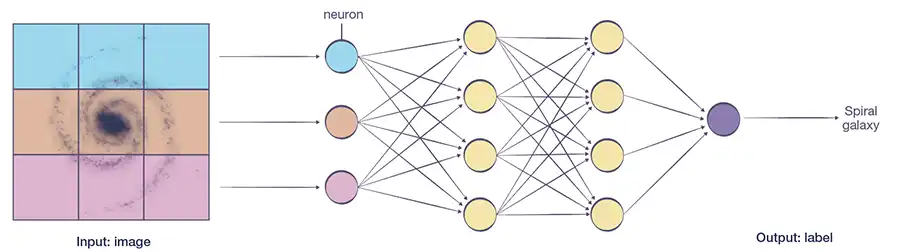

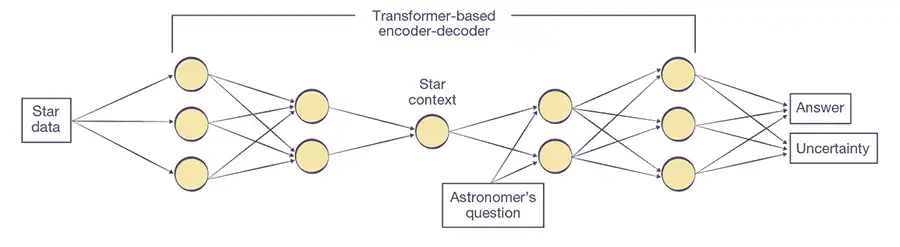

What Is a Neural Network? In this simplified diagram of a neural network, an image is divided into pixels, which are fed into a network of nodes, or neurons, that’s been previously trained on labeled images. Each neuron performs a calculation based on the input it receives. Its output then becomes the input to the next neuron. In this case, the result is a classification of the galaxy’s type.

Although astronomers employ many forms of machine learning (see the December 2017 issue of Sky & Telescope), neural networks have recently experienced a real revolution.

To understand this complex model at its simplest, let’s consider what’s involved when you’re deciding which book to read next. You’ve read many books, and you know what you like. So when evaluating new books, you might make some general distinctions, such as genre — perhaps you’re partial to science fiction. You might also evaluate more specific properties, such as preferring a certain author. Some of those preferences might have a stronger weight than others: Even if you like the author Ursula K. Le Guin, for example, you might not mind branching out to other writers.

Supervised neural networks function in a similar way. Here, supervised refers to the learning process. The network learns how to weight preferences by testing against a large set of labeled-by-humans data (your reactions to previously read books) and refining the properties of the network as it goes. Each neuron of the network becomes sensitive to some property of the preferred books. By testing its guesses against the labeled data, the network adjusts how strongly each neuron responds. Now, faced with new data, the network can answer the question, “Should I read this book next?”
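The book analogy can be made concrete with a toy sketch of a single artificial neuron learning from labeled examples. Everything here is an assumption made for illustration: the two features (genre and author), the invented reading history in `BOOKS`, and the training settings. A real network has many neurons and learns its own features rather than being handed them.

```python
import math

# Hypothetical labeled data: (is_science_fiction, is_favorite_author) -> liked (1) or not (0).
# These values are invented for illustration only.
BOOKS = [
    ((1.0, 1.0), 1),
    ((1.0, 0.0), 1),
    ((0.0, 1.0), 1),
    ((0.0, 0.0), 0),
    ((1.0, 0.0), 1),
    ((0.0, 0.0), 0),
]

def neuron(weights, bias, features):
    """One artificial neuron: a weighted sum squashed to a score between 0 and 1."""
    total = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-total))

def train(data, steps=2000, learning_rate=0.5):
    """Nudge the weights after each labeled example to shrink the error."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(steps):
        for features, label in data:
            error = neuron(weights, bias, features) - label
            weights = [w - learning_rate * error * x for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias

weights, bias = train(BOOKS)
print("Sci-fi by a new author:", round(neuron(weights, bias, (1.0, 0.0)), 2))
print("Neither preference met:", round(neuron(weights, bias, (0.0, 0.0)), 2))
```

A score near 1 means "read this next," and a score near 0 means "skip it." The learned weights play the role of the preferences described above.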

There’s one key difference that makes this an imperfect analogy: In neural networks, no one assigns neurons to specific jobs. There’s no preset “Le Guin” neuron, for example. Individual neurons in a network often have simple tasks, and it’s not always clear how those tasks tie to the overall goal. It’s only on a collective level that the network determines broader concepts, such as whether this is a book you’d like or whether a galaxy is a spiral. Both philosophically and scientifically, it’s still debated whether a neural network can perceive these higher-level concepts the way a human does. Understanding why the network behaves the way it does, and in turn how machines learn what they learn, has been a challenge.

It’s not an insurmountable one, though. To shed light on the “black box” problem, researchers are working to interpret neural networks, to better understand which choices they’re making and why. They can even assess the certainty of any given decision — and that’s key when applying AI to science.

AI for Amateurs

Professional astronomers aren’t the only ones making use of AI. See the November 2025 issue of Sky & Telescope for a summary of the use of AI in amateur astrophotography.

Follow the Crowd

Large data sets aren’t a new problem. Already at the turn of this century, professional astronomers realized there simply weren’t enough of themselves to classify every galaxy in images pouring in from the Hubble Space Telescope’s deep fields and the Sloan Digital Sky Survey. Fortunately, humans are pretty good at sorting galaxies by appearance, as long as enough of them participate.

Enter Galaxy Zoo and the crowdsourcing platform that hosts it, Zooniverse. Launched in 2007 (the same year the first iPhone came out), Zooniverse has by now hosted hundreds of projects, with people signing up by the millions to participate. Over almost two decades, those volunteers have classified almost 1 billion galaxies and other objects.

But some data sets are beyond what even crowdsourcing can do. The Euclid space observatory, for example, is observing one-third of the infrared sky in Hubble-like resolution over the next six years, doubling back to image select fields even more deeply (see the September 2025 issue for more on Euclid). A decade before Euclid launched, astronomers knew the data volume would pose a challenge. In 2013, Galaxy Zoo lead Karen Masters (Haverford College) calculated that, based on past projects, citizen scientists would need some 70 years to classify just the well-resolved examples of the 1.5 billion galaxies that the space telescope was projected to find.

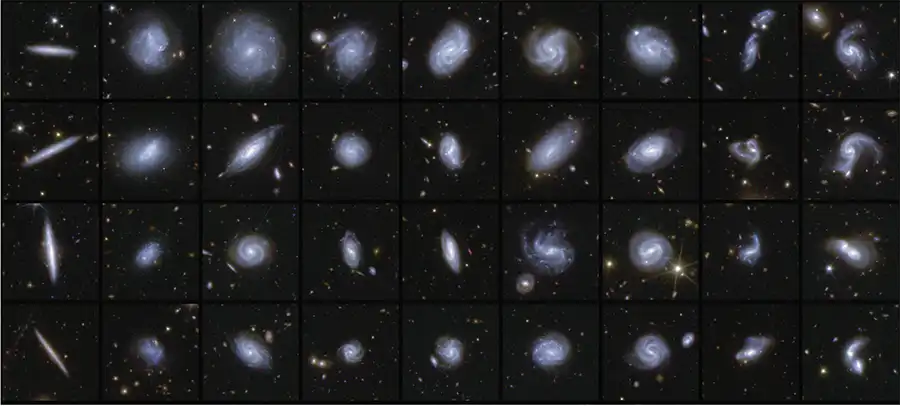

Nevertheless, citizen scientists contributed. Post-launch, the Euclid team created a short-lived project in which Zooniverse denizens helped classify the iffier-looking galaxies in preliminary data. Mike Walmsley (University of Toronto) used these labeled data to train a network named ZooBot.

For Euclid’s first data release, ZooBot classified 380,000 galaxies “on the fly,” as part of the pipeline that processes the telescope’s images after they’re downlinked to the ground. The AI model even notes whether a galaxy hosts a central bar or has tidal tails. Importantly, ZooBot includes a measure of each classification’s uncertainty.

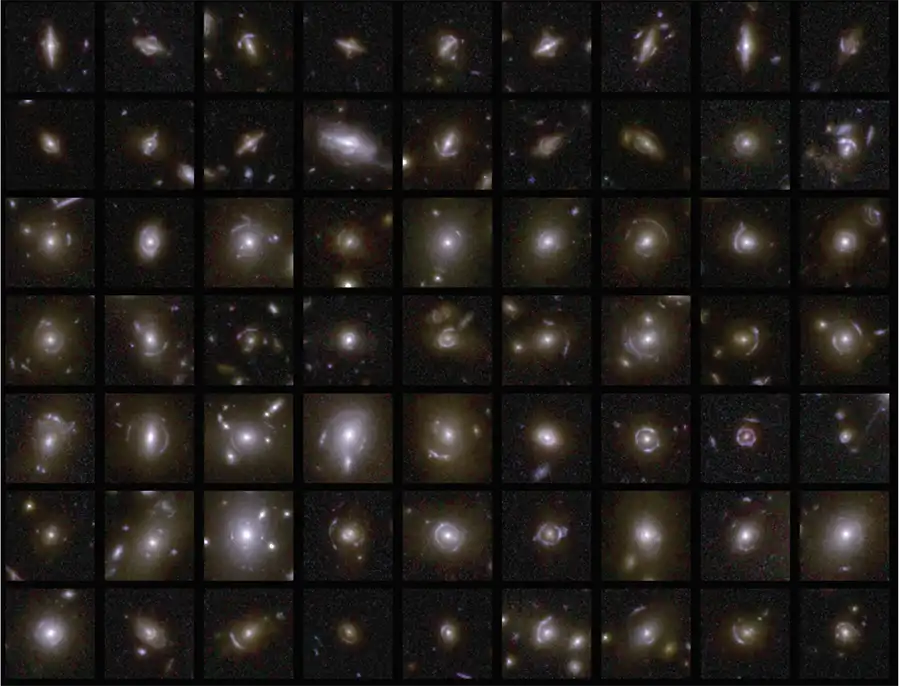

Shape Sorter This image shows examples of galaxies of different shapes, released as part of Euclid’s first data set. The release includes a detailed catalog of more than 380,000 galaxies, classified by ZooBot according to features such as spiral arms, central bars, and tidal tails that indicate merging galaxies.

“It’s a success story on how you develop and deploy AI into the workflow,” said Walmsley’s collaborator Marc Huertas-Company (Institute of Astrophysics of the Canary Islands, Spain) while speaking at an AstroAI conference in July 2025. “It’s not that we took 70 years. It took zero months. . . . This would have been unbelievable 10 years ago.”

But applying AI to citizen-labeled data sets won’t always improve the results.

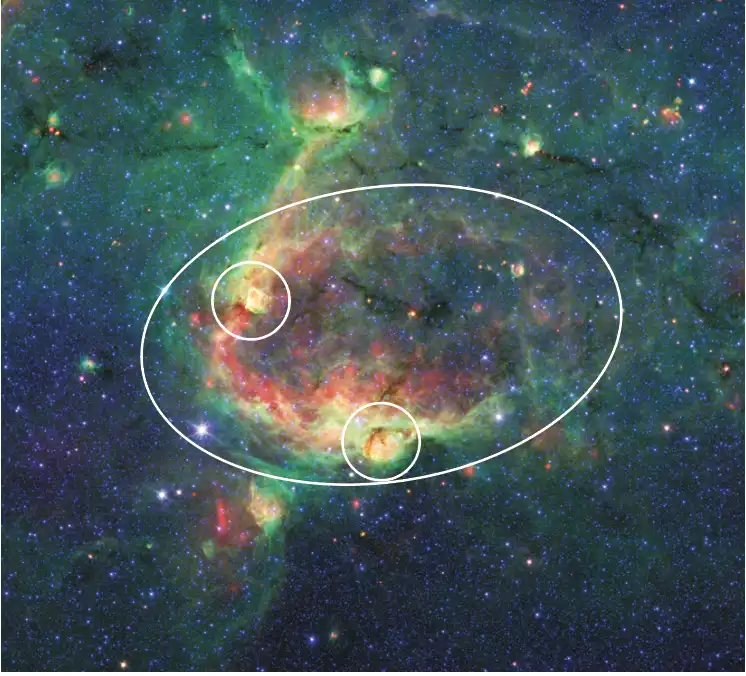

The Milky Way Project was another Galaxy Zoo initiative, launched in 2010, in which users tagged “bubbles” in infrared data. The winds and radiation from massive newborn stars heat and inflate bubbles in the gas that surrounds them. Back then, finding the myriad shapes these bubbles could take in 2D images was still beyond what computer algorithms could do. So in 2012, the Milky Way Project trained 35,000 volunteers to find more than 5,000 bubbles in data from the Spitzer Space Telescope.

Bubbles Citizen scientists with the Milky Way Project cataloged bubbles blown by massive newborn stars, such as the ones in this infrared image (marked by white ovals) from the Spitzer Space Telescope.

Last year, a team led by Toshikazu Onishi (Osaka Metropolitan University, Japan) reported that they’d trained a neural network on the Milky Way Project’s data. Testing their model on a small patch of sky, the team found that it identified 98% of the same bubbles as the citizen scientists. Then, when unleashed on a broader region of space, the AI model discovered twice as many bubbles as humans did. Only, most of those new objects aren’t actually bubbles.

Matthew Povich (California State Polytechnic University, Pomona), who led the Milky Way Project a decade ago, glanced through a few of the 1,413 new objects. Many, he says, are instead small yellow circles that represent an intermediate stage of massive star formation. Citizen scientists found these features, too, and named them “yellowballs.” The AI also flagged what Povich calls “random fluff” and, in one memorable thumbnail image, the Pillars of Creation. (The pillars are a site of star formation, but they’re not a bubble; they’re inside one.)

There are good reasons that finding new bubbles is a difficult task. Even for humans, bubbles are more difficult to identify than spiral galaxies. Spirals look like spirals whether images are fuzzy or sharp, but bubbles may change appearance depending on image resolution.

Or, it could simply be that there are no new bubbles to find: Earlier attempts using a different type of machine learning — a bubble-finding algorithm named Brut — found that the Milky Way Project’s scouring of the infrared data was mostly complete.

Interpretability: What Does It All Mean?

Even as humans still outcompete AI in select cases, it’s appealing to take humans out of the labeling loop. Labeling data is time-consuming and limiting. A workaround is to let the data points label themselves in unsupervised learning.

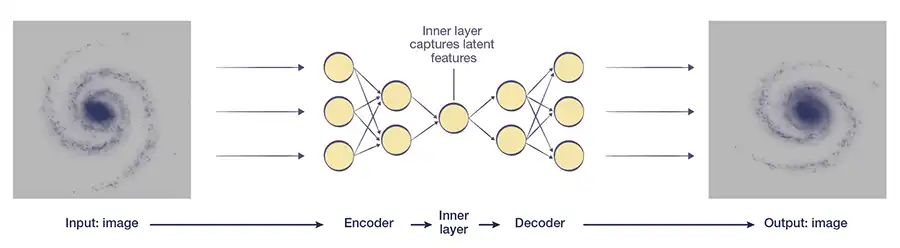

One way to do this is by using a particular neural network called an auto-encoder. In this kind of network, data pass through multiple layers of neurons collectively known as an encoder. Each layer typically contains fewer neurons than the last, boiling down the data to the most fundamental features. Then, the process runs in reverse: A multi-layered decoder expands on those features to reproduce the original data. If the model found the right fundamental properties to describe the data, then the input and output should look the same.
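The squeeze-and-expand idea can be sketched with a deliberately tiny auto-encoder. This is a toy under stated assumptions, not any astronomer's actual model: the data set `DATA` is invented so that each two-number point depends on a single hidden quantity, the encoder and decoder are plain weighted sums with no layers stacked up, and the starting weights and learning rate are arbitrary choices.

```python
# Toy data: every point is (t, 2t), so one hidden number (t) describes it fully.
DATA = [(t, 2.0 * t) for t in (-1.0, -0.5, 0.0, 0.5, 1.0)]

def encode(enc, point):
    """Encoder: squeeze two input values down to a single latent number."""
    return enc[0] * point[0] + enc[1] * point[1]

def decode(dec, z):
    """Decoder: expand the latent number back into two values."""
    return (dec[0] * z, dec[1] * z)

def mean_loss(enc, dec, data):
    """Average squared difference between each input and its reconstruction."""
    total = 0.0
    for point in data:
        recon = decode(dec, encode(enc, point))
        total += sum((r - x) ** 2 for r, x in zip(recon, point))
    return total / len(data)

def train(data, steps=2000, learning_rate=0.05):
    """Gradient descent on the reconstruction error, updating both halves together."""
    enc, dec = [0.1, 0.1], [0.1, 0.1]  # small arbitrary starting weights
    for _ in range(steps):
        grad_enc, grad_dec = [0.0, 0.0], [0.0, 0.0]
        for point in data:
            z = encode(enc, point)
            err = [r - x for r, x in zip(decode(dec, z), point)]
            back = sum(e * d for e, d in zip(err, dec))  # error passed back to the encoder
            for i in range(2):
                grad_dec[i] += 2.0 * err[i] * z / len(data)
                grad_enc[i] += 2.0 * back * point[i] / len(data)
        enc = [w - learning_rate * g for w, g in zip(enc, grad_enc)]
        dec = [w - learning_rate * g for w, g in zip(dec, grad_dec)]
    return enc, dec

start_loss = mean_loss([0.1, 0.1], [0.1, 0.1], DATA)
enc, dec = train(DATA)
final_loss = mean_loss(enc, dec, DATA)
print("loss before:", round(start_loss, 3), "after:", round(final_loss, 6))
print("reconstruction of (3, 6):", decode(dec, encode(enc, (3.0, 6.0))))
```

No one tells the network that the second value is twice the first. It finds that relationship because it is the only way to rebuild two numbers from one.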

Humans do something similar on a regular basis. Predicting what comes next in “2, 4, 6, 8, 10, 12, . . . ” is easy because we recognize the latent pattern: even numbers. Scientists do it, too: If all we know about a star is its temperature, then we can apply a single physics equation (Planck’s law) to describe the general shape of that star’s spectrum.
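As a rough illustration of how a single number can stand in for a whole spectrum, the sketch below evaluates Planck's law for an idealized star that radiates as a perfect blackbody. The physical constants are standard values. The three temperatures, the 100 to 3,000 nanometer search range, and the helper name `peak_wavelength_nm` are choices made for this example.

```python
import math

H = 6.62607015e-34   # Planck constant, J s
C = 2.99792458e8     # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K

def planck(wavelength_m, temperature_k):
    """Blackbody spectral radiance at one wavelength, in W per m^2 per steradian per meter."""
    exponent = H * C / (wavelength_m * K_B * temperature_k)
    return (2.0 * H * C ** 2 / wavelength_m ** 5) / math.expm1(exponent)

def peak_wavelength_nm(temperature_k):
    """Scan 100-3000 nm in 1 nm steps and return where the spectrum is brightest."""
    grid = range(100, 3001)
    return max(grid, key=lambda nm: planck(nm * 1e-9, temperature_k))

for temperature in (3500, 5772, 10000):  # cool red star, Sun-like star, hot blue-white star
    print(temperature, "K peaks near", peak_wavelength_nm(temperature), "nm")
```

Temperature is the latent parameter here: change it, and the entire curve shifts, with hotter stars peaking at shorter (bluer) wavelengths.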

Auto-encoders excel at finding latent patterns. The data points themselves tell the neural network what parameters are important — even though the AI isn’t trained on labeled data. Such models can learn what cats look like in an unsupervised way — that is, without being told which images show cats — and then go on to generate images of cats on demand.

In commercial settings, that’s good enough. But that kind of reconstruction isn’t sufficient for science.

“For us, that’s not what we want,” Garraffo says. “We don’t want to merely reproduce the universe. We want to understand it.” If the parameters the network deems important don’t have an obvious meaning — and they all too often don’t — then astronomers must interpret those parameters to understand how they tie to real physics.

In this diagram of an auto-encoder, an image passes through simpler and simpler layers of neurons, which encode the data into a few parameters. Then the decoder reconstructs the data. Comparing the input and output ensures that the network has captured the data’s fundamental features.

As a proof of concept, graduate student Ethan Tregidga (then at Center for Astrophysics, Harvard & Smithsonian) and members of the AstroAI collaboration applied auto-encoders to stars orbiting black holes. In these X-ray binaries, the black hole siphons off the star’s outer layers. That gas heats up as it spirals in toward the black hole, releasing high-energy radiation as it goes. The processes involved leave fingerprints in the X-ray spectrum that astronomers capture.

To make sense of the spectra, and to do so in an expedient way, Tregidga employed an auto-encoder. He started backwards, working first with the decoder. Using real, physical quantities, he trained it to create a set of X-ray spectra for various parameters. Then Tregidga froze the decoder, disabling further changes, before hooking it up to the encoder. The network compared the parameters that the encoder was finding against the parameters that the decoder had learned, essentially calibrating itself.

Finally, Tregidga turned the whole thing loose on real data. With a processing speed 2,700 times faster than traditional software, Tregidga’s AI model can now derive physical meaning from thousands of X-ray spectra at a time.

There are countless examples of one-off methods like this one, in which researchers have successfully applied a novel AI method to a unique scientific problem. But some astronomers are dreaming bigger.

One Model to Rule Them All

In recent years, revolutionary advances in AI have enabled the development of large language models, such as OpenAI’s ChatGPT. These train on large fractions of the internet and then offer users answers for just about any question. AI chatbots are capable of taking on everyday, mundane tasks or even quite creative ones. (Note: ChatGPT did not write a single sentence of this article.)

ChatGPT is possible because of a type of neural network introduced in 2017 called a transformer. Given enormous quantities of data to train on, transformers learn to give more attention to relevant aspects in the data. As a result of this advance, we now have generalized foundation models — of which ChatGPT is only one — that can just as quickly answer “What’s the weather today?” as “What is Planck’s law?”
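The core operation that lets a transformer "give more attention" is commonly called scaled dot-product attention, and a bare-bones version fits in a few lines. The sketch below is an illustration only: the two-number vectors in `keys`, `values`, and `query` are made up by hand, whereas a real transformer learns them from data and runs this step many times in parallel.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query over a list of items."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    mixed = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return weights, mixed

# Made-up 2-number vectors; in a real transformer these are learned, not hand-written.
keys = [(1.0, 0.0), (0.0, 1.0), (0.9, 0.1)]
values = [(10.0, 0.0), (0.0, 10.0), (9.0, 1.0)]
query = (2.0, 0.0)

weights, mixed = attention(query, keys, values)
print("attention weights:", [round(w, 2) for w in weights])
print("blended output:", [round(m, 2) for m in mixed])
```

Items whose keys line up with the query receive the largest weights, so the output is dominated by the most relevant parts of the input.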

Astronomers are employing similar techniques using the languages of physics and math. One example is the “proto-foundation model” for stars that Henry Leung and Jo Bovy (then both at University of Toronto) created.

“Me and Henry, we got very excited about the kinds of technologies behind large language models,” Bovy says. “But they didn’t have any way of taking into account scientific data directly, the way we do when we analyze astronomical data.”

So they set about doing just that. Using a transformer-based structure, Leung and Bovy built a neural network that can take on a variety of tasks that it wasn’t specifically designed to do. The model trained on data from the European Space Agency’s Gaia space telescope, which measured brightnesses, spectra, and motions of more than 1 billion stars in the Milky Way.

The proto-foundation model that Bovy and Leung created passes data from the Gaia mission through a neural network that’s structured as a transformer-based encoder-decoder.

“[We] put in as little physics as we could,” Bovy adds, “because we wanted to keep it very broad and see what it could just learn from the data.”

The duo also added extra layers of neurons to evaluate the distribution of possible results, enabling the model to measure the certainty of its answers. This capability proves useful when researchers ask the AI a physically impossible question.

When one asks a large language model a question it doesn’t know the answer to, it may hallucinate an answer that has no basis in reality. For example, when asked “how many a’s are in strawberry,” a past version of ChatGPT answered: “The word ‘strawberry’ has 2 letters ‘a’.” Its answer is wrong because large language models recognize patterns better than they execute calculations. (When queried about why its answer was incorrect, the chatbot offered a reanalysis, a correct answer, and an apology.)

However, Leung and Bovy’s proto-foundation model was designed to answer questions not with confidence but with qualifications. “Our take has been that [hallucination] isn’t such a problem in scientific data,” Bovy explains, “as long as we also predict the uncertainty.”

For example, he and Leung asked their model to generate a spectrum for a star with a combination of temperature and surface gravity that doesn’t exist in nature. “Our model will give you the spectrum of this star,” Bovy says. “But it will also say, ‘This is extremely uncertain, because I don’t really know what I’m doing.’” The model indicates its low confidence in the answer by providing very large error bars.
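One generic way to see why error bars balloon for questions far from the training data is to fit many simple models to reshuffled copies of the same measurements and watch how much they disagree. To be clear, this bootstrap approach is a stand-in chosen for brevity and is not the method Leung and Bovy used. The data in `training` are invented, and the function names are hypothetical.

```python
import random
import statistics

def fit_line(points):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    if sxx < 1e-12:
        return None  # degenerate resample: essentially one x value, so no slope
    slope = sum((x - mean_x) * (y - mean_y) for x, y in points) / sxx
    return mean_y - slope * mean_x, slope

def predict_with_error(points, x_new, n_models=200, seed=0):
    """Fit many lines to resampled data; the spread of their answers is the error bar."""
    rng = random.Random(seed)
    guesses = []
    for _ in range(n_models):
        sample = [rng.choice(points) for _ in points]
        fit = fit_line(sample)
        if fit is not None:
            intercept, slope = fit
            guesses.append(intercept + slope * x_new)
    return statistics.mean(guesses), statistics.stdev(guesses)

# Invented noisy measurements covering only x = 0 to 1.
noise = random.Random(1)
training = [(i / 19, 2.0 * (i / 19) + noise.gauss(0.0, 0.1)) for i in range(20)]

inside = predict_with_error(training, 0.5)    # within the range the models have seen
outside = predict_with_error(training, 10.0)  # far outside it
print("x=0.5  -> %.2f +/- %.2f" % inside)
print("x=10.0 -> %.2f +/- %.2f" % outside)
```

The models agree closely where they have data and scatter widely where they don't, which is the behavior Bovy describes: an answer, plus an honest signal of how little it should be trusted.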

Leung and Bovy’s model is far from the first attempt to build a foundation-type model — multiple teams of astronomers have released more than a dozen of them over the last two years. In fact, Walmsley’s ZooBot is a foundation model that can transfer previous learning to new tasks with minimal retraining. Using a tiny sample of just 200 ring galaxies that citizen scientists had identified, ZooBot found 10,000 more in data from the Dark Energy Camera Legacy Survey.

Through a Lens Astronomers detected 500 new gravitational lenses in Euclid data using the ZooBot model to do a first pass through the data, followed by citizen-science inspection and expert vetting and modeling. Euclid is expected to capture 100,000 such lenses by the end of its mission.

Such successes don’t mean citizen science is going away — far from it. “We can now use the new labels Galaxy Zoo volunteers are creating to quickly build specialized models,” Walmsley wrote in his blog. “Every label is now more valuable, not less.”

As powerful as foundation models may be, they are not necessarily the best tools for a given problem. It’s also worth noting that their use remains controversial. The broad training they receive makes it easy for scientists to adapt them to different tasks, but adapted models inherit defects in the “parent” model. And of course, with ease of use comes the potential for misuse.

The Many Faces of AI

At last year’s AstroAI conference, participants voiced excitement about AI’s potential to revolutionize the way astronomy is done. Besides analyzing data, AI tools help astronomers on a daily basis, whether in writing code or scientific papers.

But the true excitement lies in the unknown. AI technology may enable new approaches or interpretations of astronomical data that simply weren’t possible before. “There are things that can only be discovered when someone looks at enough scales simultaneously, and that might be more scales than a human can comprehend,” says astronomer and machine-learning researcher Philipp Frank (Stanford).

But even as AI accelerates the processes that fuel astronomy, it’s generating concern, too. Scientists at the conference pointed out that astronomers risk losing touch with their data if they don’t examine celestial objects individually and instead focus solely on ensembles. It’s not always easy to see when populations don’t represent reality.

“Things can be intrinsically rare in your data set because they’re intrinsically rare in the universe — or they can be rare because they’re hard to find,” one AstroAI participant pointed out. “And then, when you train on that data set, you end up with an algorithm that has learned that bias.” Ferreting out biases can be difficult, all the more so when we don’t know they are there.

Some concerns are even more philosophical: Do we want AI to be the ones making discoveries? “I wouldn’t want to have in my living room something that was painted by AI,” points out astronomer-turned-data scientist Viviana Acquaviva (New York City College of Technology). “I assign a value to human creativity.” Part of that value is the struggle, she says. Making mistakes is how humans learn. AI helps us find answers faster, but that increased speed may come at the cost of human experience.

Multiple participants at the conference — even those deeply involved in using AI for their research — worry that the technology will ultimately take the joy out of their work. If AI can analyze data, interpret it, and write papers, then perhaps AI can take over astronomers’ work completely. “What do we want our job to be in 10 years?” asks Acquaviva. “Do we want to know as much as humanly possible? Or do we want to preserve some of the things that we enjoy?

“You wanted AI to do your dishes, so you can write and study,” she adds, “and all that’s left for you is the dishes.”

Not everyone shares those concerns to the same degree. “There are certain caveats and things for us to maneuver around,” says Frank, “but I do think [AI] is transformative in virtually every task.”

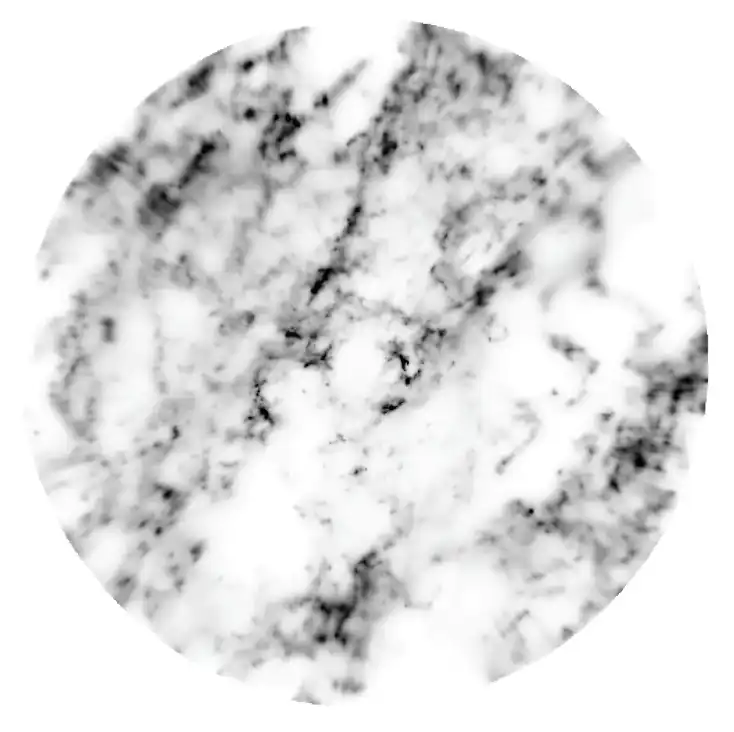

Frank is part of a team that used AI to map dust in the Milky Way. Dust tends to scatter more blue light than red light, so a star that appears redder than it ought to likely has dust in front of it. By training a neural network on a few million stars observed by the Gaia mission that remain unreddened by dust, researchers generated artificial spectra that are high-resolution, dust-free, and accurate for a star’s given spectral type. Then, by comparing Gaia data against generated spectra for millions of stars at a time, Frank and collaborators obtained a measure of how much dust is out there. The result? A dust map that extends 4,000 light-years out from the Sun.
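The comparison at the heart of this approach can be sketched with a little arithmetic, using the astronomer's magnitude scale to express dimming. This is a simplified illustration, not the team's pipeline: the flux values are invented, and only two wavelengths are compared where the real work uses full spectra for millions of stars.

```python
import math

def extinction_mag(observed_flux, dust_free_flux):
    """Dimming in magnitudes: how much fainter the star looks than it should."""
    return -2.5 * math.log10(observed_flux / dust_free_flux)

# Invented fluxes (arbitrary units) at a blue and a red wavelength.
dust_free = {"blue": 100.0, "red": 80.0}   # what the model says the star should emit
observed = {"blue": 40.0, "red": 56.0}     # what the telescope actually recorded

dimming = {band: extinction_mag(observed[band], dust_free[band]) for band in dust_free}
reddening = dimming["blue"] - dimming["red"]  # positive when blue light is hit harder

print("dimming in blue: %.2f mag" % dimming["blue"])
print("dimming in red:  %.2f mag" % dimming["red"])
print("reddening:       %.2f mag" % reddening)
```

The larger the gap between blue and red dimming, the more dust lies along the line of sight to that star.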

Between the Stars Astronomers created this dust map around the solar neighborhood using AI. Darker regions are denser with dust. The Sun is at center and the galactic center toward the right; the map is 8,000 light-years wide.

“I would say it’s AI-assisted, human-in-the-loop work, in the sense that the humans decided what to look at and why, but a lot of the modeling is then machine-driven,” says Frank. “We tried to keep the fun — but then, no human is able to process a million stars.”

Frank sees this combination of human guidance and AI assistance as key to the future. AI, after all, can understand data in several different forms, scales, and dimensions, and it can process massive amounts of data to learn something new. But it’s humans who excel at grasping new ideas using only a few examples.

“Humans are incredible at getting these extremely minimalistic, extremely expressive models,” Frank says. “Humans are...way, way beyond any machine.”

The use of AI continues to expand, and the roles it plays in our everyday lives are still evolving. But from Garraffo’s perspective, the developments in astronomy have been overwhelmingly positive. “We are doing it for something that is good,” she says.

These tools will free up astronomers’ time to do astronomy, she argues — and there will be plenty to do. The wave of cosmic data is still coming, she says. “I’m freaking out, we should all be freaking out! We’re not ready.” With AI, she adds, “we’re going to make so many more discoveries.”

This story originally appeared in the April 2026 issue of Sky & Telescope.
